Smart Driving Without Markers: Application of Emotion Monitoring in Driving Behavior Recognition and Safety Assistance

With the rapid development of intelligent driving technology, cars are gradually transforming from simple means of transportation to highly intelligent mobile platforms. Drivers' emotional states and behavioral intentions, as important factors affecting driving behavior, are increasingly attracting the attention of researchers. The impact of emotions on driving is often subtle, but its consequences can be serious. For example, anger can lead to risky driving, increasing the risk of accidents, while fatigue or stress can lead to sluggish operation or distraction, endangering driving safety.

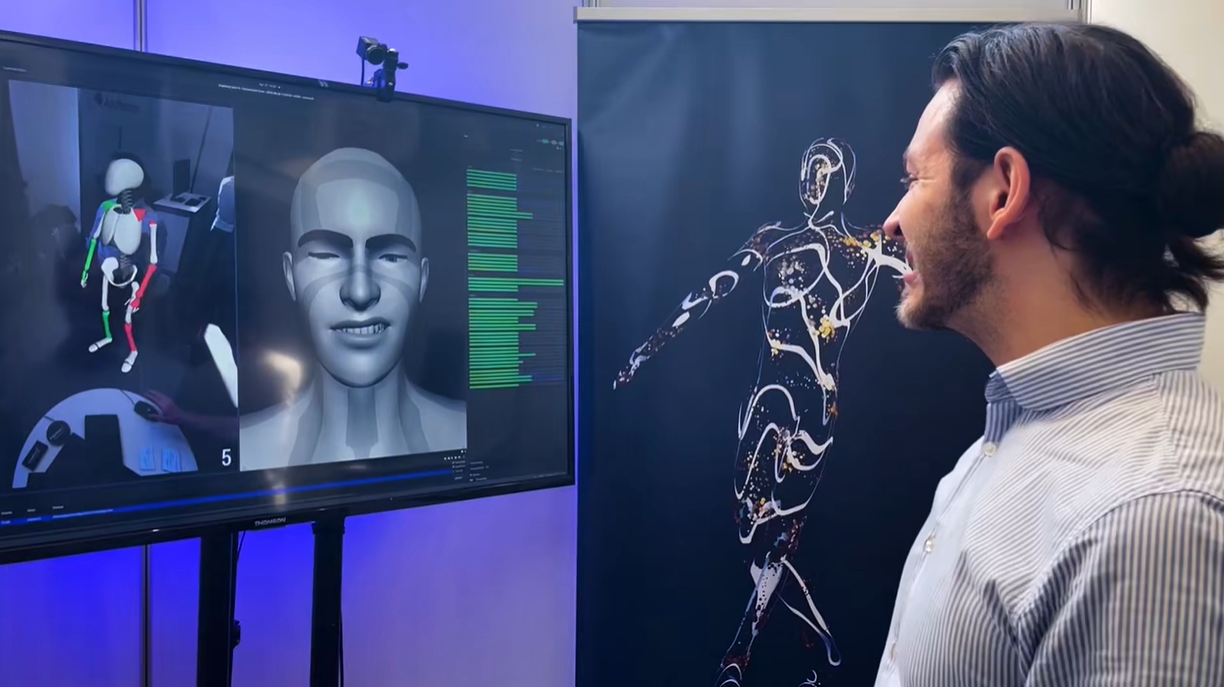

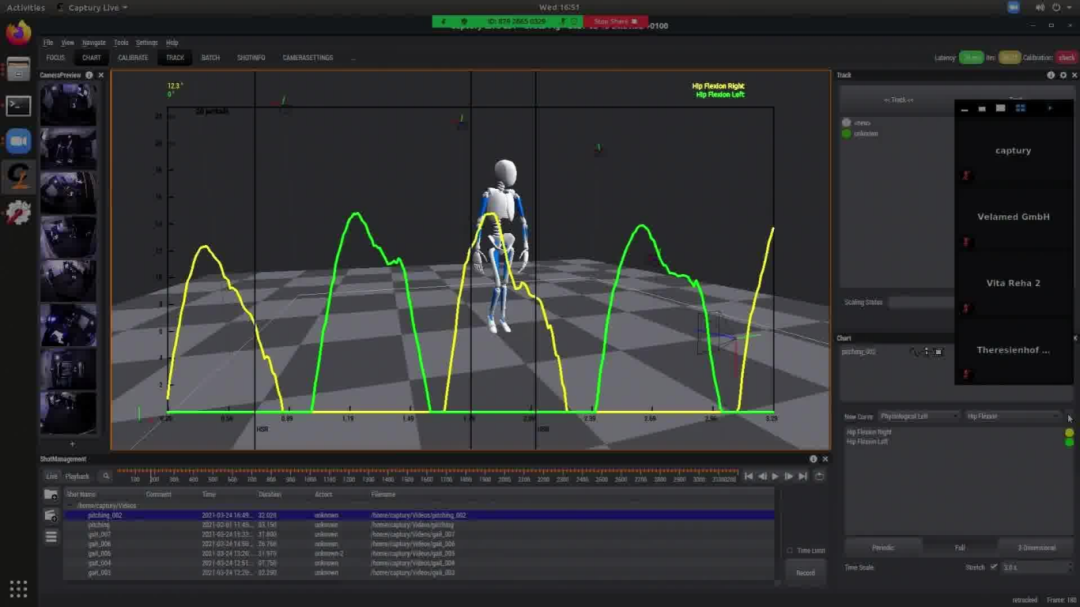

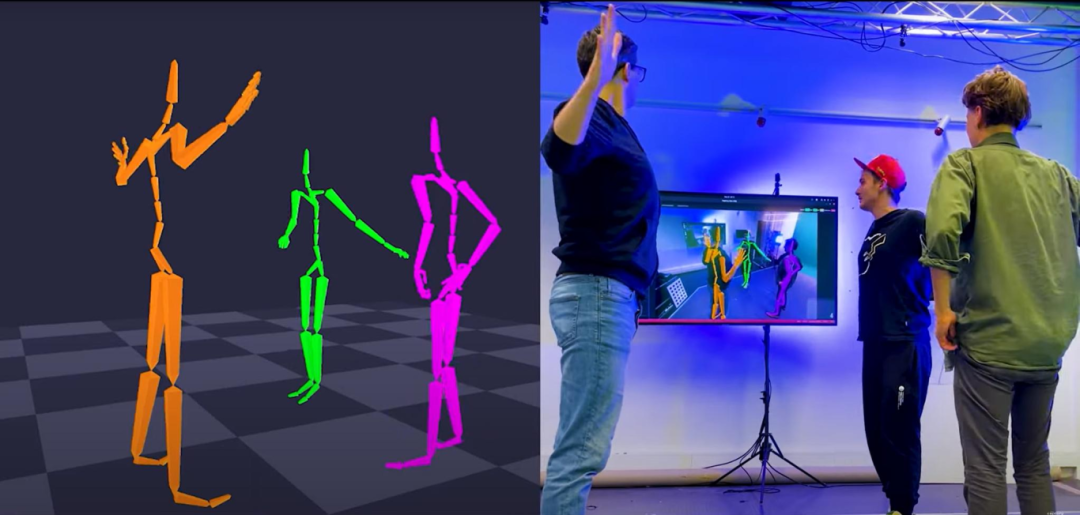

The core of a markerless motion capture system lies in the fusion of computer vision and artificial intelligence algorithms. Using multi-angle cameras installed in the cockpit, the system captures the driver's face, eyes, lips and upper body movements in real time and generates high-precision feature point data. This data is then processed by deep learning models to extract micro-expression features and behavioral patterns related to emotions. The Facial Action Coding System (FACS) is one of the foundations of this technology. It decomposes facial muscle activity into specific action units, such as brow lowering or lip corner depression.
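As a rough illustration of how action units could be turned into emotion scores, the sketch below averages the intensities of the action units listed for each emotion. The prototype table, the 0.0 to 1.0 intensity scale, and the names `PROTOTYPES`, `score_emotions`, and `dominant_emotion` are assumptions made for this example. It is a simplified stand-in for the deep learning models mentioned above, not a validated mapping.

```python
from statistics import mean

# Hypothetical prototype table: emotion -> action units (AUs) expected to be active.
# The AU numbers are standard FACS codes; the groupings are illustrative only.
PROTOTYPES = {
    "anger": ("AU4", "AU5", "AU7", "AU23"),   # brow lowerer, upper lid raiser, lid tightener, lip tightener
    "sadness": ("AU1", "AU4", "AU15"),        # inner brow raiser, brow lowerer, lip corner depressor
    "happiness": ("AU6", "AU12"),             # cheek raiser, lip corner puller
}


def score_emotions(au_intensity):
    """Average the intensity (0.0 to 1.0) of each prototype's AUs; missing AUs count as 0."""
    return {
        emotion: mean(au_intensity.get(au, 0.0) for au in aus)
        for emotion, aus in PROTOTYPES.items()
    }


def dominant_emotion(au_intensity, min_score=0.5):
    """Return the highest-scoring emotion, or "neutral" if nothing reaches min_score."""
    scores = score_emotions(au_intensity)
    emotion, score = max(scores.items(), key=lambda item: item[1])
    return emotion if score >= min_score else "neutral"


# One frame of (assumed) AU intensities produced by an upstream face tracker.
frame = {"AU4": 0.9, "AU5": 0.7, "AU7": 0.8, "AU23": 0.6}
print(dominant_emotion(frame))
```

A real system would learn this mapping from labeled data and account for individual differences. The table form only makes the idea of "action units in, emotion scores out" concrete.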

By analyzing these action units, the system can infer the driver's emotional state, such as anger, anxiety, exhaustion, or relaxation.

In addition to two-dimensional images, many markerless systems also use depth cameras to generate a three-dimensional face model of the driver. This modeling approach not only improves the accuracy of emotion recognition, but also keeps tracking stable when the driver's face is partially obscured (e.g. by glasses or a mask). Combined with time series analysis, the system can also track how emotions change over time. For example, when the driver gradually shifts from a calm state to an anxious one, the system can make predictions based on the change curve of the feature points, so that intervention measures can be taken in time.
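The time series idea can be sketched as a sliding window over a per-frame anxiety score, with a least-squares slope used as the "change curve". The class name `TrendMonitor`, the window size, and the slope threshold are hypothetical values chosen for illustration. A production system would tune them on real data.

```python
from collections import deque


class TrendMonitor:
    """Tracks recent emotion scores and reports whether they are trending upward."""

    def __init__(self, window=5, slope_threshold=0.05):
        self.samples = deque(maxlen=window)      # oldest samples drop off automatically
        self.slope_threshold = slope_threshold   # assumed score increase per sample

    def update(self, score):
        """Add one score (0.0 to 1.0) and return the current slope."""
        self.samples.append(score)
        return self.slope()

    def slope(self):
        """Least-squares slope of score against sample index within the window."""
        n = len(self.samples)
        if n < 2:
            return 0.0
        x_mean = (n - 1) / 2
        y_mean = sum(self.samples) / n
        numerator = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(self.samples))
        denominator = sum((i - x_mean) ** 2 for i in range(n))
        return numerator / denominator

    def is_rising(self):
        """True only once the window is full and the slope exceeds the threshold."""
        return len(self.samples) == self.samples.maxlen and self.slope() > self.slope_threshold


monitor = TrendMonitor()
for anxiety in (0.1, 0.2, 0.3, 0.4, 0.5):   # driver drifting from calm toward anxious
    monitor.update(anxiety)
print(monitor.is_rising())
```

Using a slope over a window rather than a single-frame threshold makes the check less sensitive to one noisy frame, at the cost of a short detection delay.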

CapturyLive offers a real-time, markerless motion capture solution that captures human motion flexibly, without markers, wearables, or other specialized hardware.

The Captury Face tracking solution can drive any ARKit-compatible character for facial performance capture. It uses Apple's 52 ARKit blend shapes to produce accurate activations that capture subtle facial expressions. With just a high-definition webcam, you can capture high-fidelity facial expressions without specialized equipment or complex setup.

In addition to supporting tracking with any quality webcam, Captury Face also supports tracking footage from head-mounted cameras. Whether you choose a webcam or a head-mounted camera, accurate tracking and precise motion capture are ensured for realistic and immersive facial animations.

The Captury Face tracking solution integrates into existing workflows, allowing the capture to be streamed directly to leading engines and software. This streamlined process not only saves time and effort, but also improves the overall workflow by letting facial expressions be visualized and refined immediately in a familiar environment.

The significance of emotion monitoring lies in its strong influence on driving intentions. Drivers' emotional states are closely related to how they operate the vehicle and how they make decisions. Studies have shown that anger can lead drivers to ignore traffic rules, accelerate suddenly or overtake aggressively, while anxiety can show up as excessive caution or even hesitation. Combining a markerless motion capture system with data analytics software to analyze the correlation between emotions and behaviors in real time makes it possible not only to identify current driving behavior, but also to predict likely upcoming intentions.

For example, when the system detects that a driver is showing anxiety and behaving abnormally, it can predict that they may make an unsafe lane change in the next few seconds.

The core goal of advanced driver assistance systems (ADAS) is to improve driving safety and comfort, and emotion monitoring gives them a higher level of intelligence. The introduction of markerless motion capture enables ADAS to shift from "passive response" to "active perception".

When the driver's emotional fluctuations reach a dangerous threshold, such as when anger leads to aggressive driving or fatigue causes a loss of concentration, ADAS can respond in time based on the emotion monitoring data. For example, the system can remind the driver to drive calmly through voice warnings, seat vibrations or visual cues. If the dangerous behavior persists, ADAS can intervene proactively, for instance by limiting functions such as acceleration, and can even take over vehicle control if necessary to avoid a potential accident.
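The escalation described above (warn first, then limit acceleration, then take over) can be sketched as a small decision function. The risk score, the `Response` levels, and all thresholds and durations below are hypothetical. They are assumptions for illustration and not values from any real ADAS.

```python
from enum import IntEnum


class Response(IntEnum):
    NONE = 0
    WARN = 1                 # voice warning, seat vibration, or visual cue
    LIMIT_ACCELERATION = 2   # restrict functions such as acceleration
    TAKE_OVER = 3            # system assumes vehicle control


def choose_response(risk, seconds_over, warn_at=0.6,
                    limit_after_s=5.0, takeover_after_s=15.0):
    """Pick a response from the current risk score (0.0 to 1.0) and how long
    the score has stayed at or above the warning threshold (assumed inputs)."""
    if risk < warn_at:
        return Response.NONE
    if seconds_over >= takeover_after_s:
        return Response.TAKE_OVER
    if seconds_over >= limit_after_s:
        return Response.LIMIT_ACCELERATION
    return Response.WARN


print(choose_response(risk=0.8, seconds_over=6.0).name)
```

Tying the stronger interventions to how long the risk persists, rather than to the score alone, mirrors the "if the dangerous behavior persists" condition in the text.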

Emotion monitoring data can also be used to optimize the in-car environment and driving experience. For example, when the system detects that the driver is anxious or fatigued, it can improve comfort and help the driver relax by adjusting the cabin temperature, changing the interior lighting or activating functions such as air purification.

In fully autonomous driving mode, emotion monitoring of the vehicle's occupants remains important. For example, when the system detects that a passenger is showing high levels of anxiety, the autonomous driving strategy can be adjusted to use smoother acceleration and braking and to avoid unnecessary lane changes, thus enhancing passenger trust and comfort.
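One way to picture this adjustment is a comfort profile whose limits are scaled by the detected anxiety level. The `DrivingProfile` fields, the units, and the linear scaling rule are assumptions made for this sketch. Emergency braking is deliberately left out, since a comfort setting should not restrict it.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class DrivingProfile:
    max_accel_mps2: float           # comfort acceleration limit
    comfort_decel_mps2: float       # comfort braking limit (not emergency braking)
    min_lane_change_gap_s: float    # minimum time between discretionary lane changes


def soften_profile(base, anxiety, max_reduction=0.5):
    """Return a gentler profile as anxiety (0.0 to 1.0) rises.

    At anxiety 1.0 the acceleration and comfort braking limits are cut by
    max_reduction, and the lane-change gap grows by the inverse factor.
    """
    anxiety = min(max(anxiety, 0.0), 1.0)   # clamp out-of-range scores
    scale = 1.0 - max_reduction * anxiety
    return replace(
        base,
        max_accel_mps2=base.max_accel_mps2 * scale,
        comfort_decel_mps2=base.comfort_decel_mps2 * scale,
        min_lane_change_gap_s=base.min_lane_change_gap_s / scale,
    )


base = DrivingProfile(max_accel_mps2=2.0, comfort_decel_mps2=3.0, min_lane_change_gap_s=10.0)
print(soften_profile(base, anxiety=1.0))
```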

After an accident, the emotion monitoring data can also be used to trace the driver's behavior patterns, providing a reference for the analysis of the cause of the accident to determine whether the operator made a mistake due to anger or fatigue. In addition, the data can also be used for driver training and advanced driver assistance system algorithm optimization to help drivers understand the impact of their emotions on driving behavior, so as to improve safety awareness.

Although markerless motion capture has broad application prospects in emotion monitoring, its development still faces technical and ethical challenges. First, ensuring accurate emotion recognition under complex lighting and facial occlusion is a technical problem that urgently needs to be solved. In addition, personalized emotion modeling requires larger datasets and more efficient algorithms to cope with individual differences between drivers. Multimodal fusion is another direction for future development, for example combining facial expressions with physiological signals (such as heart rate or electrodermal activity) to provide a more comprehensive emotional assessment.
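A simple form of multimodal fusion is a weighted average of per-modality scores that skips any sensor with no current reading. The modality names and weights below are hypothetical. This is a minimal late-fusion sketch, and learned fusion models are a common alternative.

```python
def fuse_scores(scores, weights):
    """Weighted average of the modality scores that are present.

    scores:  modality name -> score in 0.0 to 1.0, or None if unavailable
    weights: modality name -> relative weight (assumed, not calibrated)
    Returns None when no weighted modality has a reading.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for name, value in scores.items():
        if value is None:
            continue                      # sensor dropped out or face occluded
        weight = weights.get(name, 0.0)
        weighted_sum += weight * value
        total_weight += weight
    if total_weight == 0.0:
        return None
    return weighted_sum / total_weight


weights = {"face": 0.6, "heart_rate": 0.2, "skin_conductance": 0.2}
readings = {"face": 0.8, "heart_rate": 0.4, "skin_conductance": None}
print(fuse_scores(readings, weights))
```

Renormalizing over the available modalities lets the estimate degrade gracefully when, for example, the face is occluded but heart rate is still being measured.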

In terms of ethics and privacy, emotion monitoring involves sensitive personal data and must be designed with strict privacy principles to ensure transparent and secure data collection and use. This includes driver informed consent, data anonymization, and the application of encryption technology in data transmission and storage.
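As a small example of the data handling side, the sketch below replaces the driver identifier with a keyed hash and keeps only an allow-list of fields before a record is stored. The record layout and the function names are assumptions. Note that a keyed hash is pseudonymization rather than full anonymization, and that encryption in transit and at rest would still be needed on top of it.

```python
import hashlib
import hmac


def pseudonymize(driver_id, secret_key):
    """Derive a stable pseudonym with HMAC-SHA256; the raw ID is not stored."""
    return hmac.new(secret_key, driver_id.encode("utf-8"), hashlib.sha256).hexdigest()


def sanitize_record(record, secret_key, keep=("timestamp", "emotion", "score")):
    """Keep only allow-listed fields and swap the driver ID for its pseudonym."""
    clean = {field: record[field] for field in keep if field in record}
    clean["driver_ref"] = pseudonymize(record["driver_id"], secret_key)
    return clean


# Hypothetical record; in practice the key would come from a secure key store.
key = b"replace-with-a-secret-from-a-key-store"
raw = {
    "driver_id": "driver-001",
    "name": "Example Driver",
    "timestamp": 1700000000,
    "emotion": "anxiety",
    "score": 0.72,
}
print(sanitize_record(raw, key))
```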
